The award-winning WIRED UK Podcast with James Temperton and the rest of the team. Listen every week for the an informed and entertaining rundown of latest technology, science, business and culture news. New episodes every Friday.

…

continue reading

Inhalt bereitgestellt von LessWrong. Alle Podcast-Inhalte, einschließlich Episoden, Grafiken und Podcast-Beschreibungen, werden direkt von LessWrong oder seinem Podcast-Plattformpartner hochgeladen und bereitgestellt. Wenn Sie glauben, dass jemand Ihr urheberrechtlich geschütztes Werk ohne Ihre Erlaubnis nutzt, können Sie dem hier beschriebenen Verfahren folgen https://de.player.fm/legal.

Player FM - Podcast-App

Gehen Sie mit der App Player FM offline!

Gehen Sie mit der App Player FM offline!

“Anthropic is (probably) not meeting its RSP security commitments” by habryka

Manage episode 520491236 series 3364760

Inhalt bereitgestellt von LessWrong. Alle Podcast-Inhalte, einschließlich Episoden, Grafiken und Podcast-Beschreibungen, werden direkt von LessWrong oder seinem Podcast-Plattformpartner hochgeladen und bereitgestellt. Wenn Sie glauben, dass jemand Ihr urheberrechtlich geschütztes Werk ohne Ihre Erlaubnis nutzt, können Sie dem hier beschriebenen Verfahren folgen https://de.player.fm/legal.

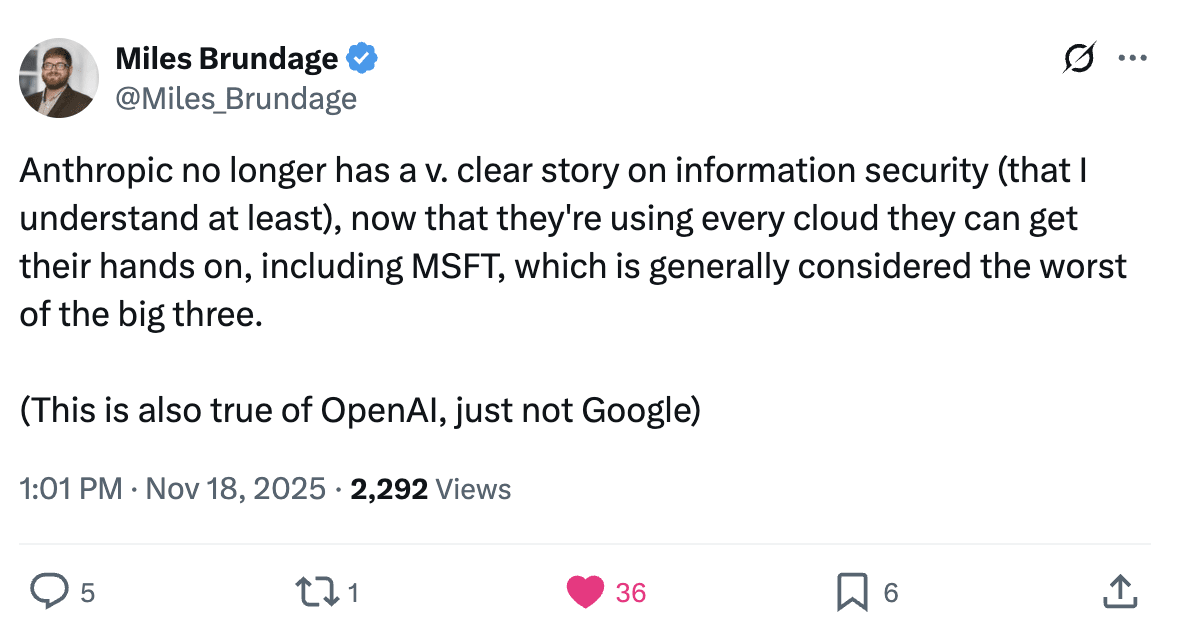

TLDR: An AI company's model weight security is at most as good as its compute providers' security. Anthropic has committed (with a bit of ambiguity, but IMO not that much ambiguity) to be robust to attacks from corporate espionage teams at companies where it hosts its weights. Anthropic seems unlikely to be robust to those attacks. Hence they are in violation of their RSP.

Anthropic is committed to being robust to attacks from corporate espionage teams (which includes corporate espionage teams at Google, Microsoft and Amazon)

From the Anthropic RSP:

When a model must meet the ASL-3 Security Standard, we will evaluate whether the measures we have implemented make us highly protected against most attackers’ attempts at stealing model weights.

We consider the following groups in scope: hacktivists, criminal hacker groups, organized cybercrime groups, terrorist organizations, corporate espionage teams, internal employees, and state-sponsored programs that use broad-based and non-targeted techniques (i.e., not novel attack chains).

[...]

We will implement robust controls to mitigate basic insider risk, but consider mitigating risks from sophisticated or state-compromised insiders to be out of scope for ASL-3. We define “basic insider risk” as risk from an insider who does not have persistent or time-limited [...]

---

Outline:

(00:37) Anthropic is committed to being robust to attacks from corporate espionage teams (which includes corporate espionage teams at Google, Microsoft and Amazon)

(03:40) Claude weights that are covered by ASL-3 security requirements are shipped to many Amazon, Google, and Microsoft data centers

(04:55) This means given executive buy-in by a high-level Amazon, Microsoft or Google executive, their corporate espionage team would have virtually unlimited physical access to Claude inference machines that host copies of the weights

(05:36) With unlimited physical access, a competent corporate espionage team at Amazon, Microsoft or Google could extract weights from an inference machine, without too much difficulty

(06:18) Given all of the above, this means Anthropic is in violation of its most recent RSP

(07:05) Postscript

---

First published:

November 18th, 2025

Source:

https://www.lesswrong.com/posts/zumPKp3zPDGsppFcF/anthropic-is-probably-not-meeting-its-rsp-security

---

Narrated by TYPE III AUDIO.

---

…

continue reading

Anthropic is committed to being robust to attacks from corporate espionage teams (which includes corporate espionage teams at Google, Microsoft and Amazon)

From the Anthropic RSP:

When a model must meet the ASL-3 Security Standard, we will evaluate whether the measures we have implemented make us highly protected against most attackers’ attempts at stealing model weights.

We consider the following groups in scope: hacktivists, criminal hacker groups, organized cybercrime groups, terrorist organizations, corporate espionage teams, internal employees, and state-sponsored programs that use broad-based and non-targeted techniques (i.e., not novel attack chains).

[...]

We will implement robust controls to mitigate basic insider risk, but consider mitigating risks from sophisticated or state-compromised insiders to be out of scope for ASL-3. We define “basic insider risk” as risk from an insider who does not have persistent or time-limited [...]

---

Outline:

(00:37) Anthropic is committed to being robust to attacks from corporate espionage teams (which includes corporate espionage teams at Google, Microsoft and Amazon)

(03:40) Claude weights that are covered by ASL-3 security requirements are shipped to many Amazon, Google, and Microsoft data centers

(04:55) This means given executive buy-in by a high-level Amazon, Microsoft or Google executive, their corporate espionage team would have virtually unlimited physical access to Claude inference machines that host copies of the weights

(05:36) With unlimited physical access, a competent corporate espionage team at Amazon, Microsoft or Google could extract weights from an inference machine, without too much difficulty

(06:18) Given all of the above, this means Anthropic is in violation of its most recent RSP

(07:05) Postscript

---

First published:

November 18th, 2025

Source:

https://www.lesswrong.com/posts/zumPKp3zPDGsppFcF/anthropic-is-probably-not-meeting-its-rsp-security

---

Narrated by TYPE III AUDIO.

---

679 Episoden

Manage episode 520491236 series 3364760

Inhalt bereitgestellt von LessWrong. Alle Podcast-Inhalte, einschließlich Episoden, Grafiken und Podcast-Beschreibungen, werden direkt von LessWrong oder seinem Podcast-Plattformpartner hochgeladen und bereitgestellt. Wenn Sie glauben, dass jemand Ihr urheberrechtlich geschütztes Werk ohne Ihre Erlaubnis nutzt, können Sie dem hier beschriebenen Verfahren folgen https://de.player.fm/legal.

TLDR: An AI company's model weight security is at most as good as its compute providers' security. Anthropic has committed (with a bit of ambiguity, but IMO not that much ambiguity) to be robust to attacks from corporate espionage teams at companies where it hosts its weights. Anthropic seems unlikely to be robust to those attacks. Hence they are in violation of their RSP.

Anthropic is committed to being robust to attacks from corporate espionage teams (which includes corporate espionage teams at Google, Microsoft and Amazon)

From the Anthropic RSP:

When a model must meet the ASL-3 Security Standard, we will evaluate whether the measures we have implemented make us highly protected against most attackers’ attempts at stealing model weights.

We consider the following groups in scope: hacktivists, criminal hacker groups, organized cybercrime groups, terrorist organizations, corporate espionage teams, internal employees, and state-sponsored programs that use broad-based and non-targeted techniques (i.e., not novel attack chains).

[...]

We will implement robust controls to mitigate basic insider risk, but consider mitigating risks from sophisticated or state-compromised insiders to be out of scope for ASL-3. We define “basic insider risk” as risk from an insider who does not have persistent or time-limited [...]

---

Outline:

(00:37) Anthropic is committed to being robust to attacks from corporate espionage teams (which includes corporate espionage teams at Google, Microsoft and Amazon)

(03:40) Claude weights that are covered by ASL-3 security requirements are shipped to many Amazon, Google, and Microsoft data centers

(04:55) This means given executive buy-in by a high-level Amazon, Microsoft or Google executive, their corporate espionage team would have virtually unlimited physical access to Claude inference machines that host copies of the weights

(05:36) With unlimited physical access, a competent corporate espionage team at Amazon, Microsoft or Google could extract weights from an inference machine, without too much difficulty

(06:18) Given all of the above, this means Anthropic is in violation of its most recent RSP

(07:05) Postscript

---

First published:

November 18th, 2025

Source:

https://www.lesswrong.com/posts/zumPKp3zPDGsppFcF/anthropic-is-probably-not-meeting-its-rsp-security

---

Narrated by TYPE III AUDIO.

---

…

continue reading

Anthropic is committed to being robust to attacks from corporate espionage teams (which includes corporate espionage teams at Google, Microsoft and Amazon)

From the Anthropic RSP:

When a model must meet the ASL-3 Security Standard, we will evaluate whether the measures we have implemented make us highly protected against most attackers’ attempts at stealing model weights.

We consider the following groups in scope: hacktivists, criminal hacker groups, organized cybercrime groups, terrorist organizations, corporate espionage teams, internal employees, and state-sponsored programs that use broad-based and non-targeted techniques (i.e., not novel attack chains).

[...]

We will implement robust controls to mitigate basic insider risk, but consider mitigating risks from sophisticated or state-compromised insiders to be out of scope for ASL-3. We define “basic insider risk” as risk from an insider who does not have persistent or time-limited [...]

---

Outline:

(00:37) Anthropic is committed to being robust to attacks from corporate espionage teams (which includes corporate espionage teams at Google, Microsoft and Amazon)

(03:40) Claude weights that are covered by ASL-3 security requirements are shipped to many Amazon, Google, and Microsoft data centers

(04:55) This means given executive buy-in by a high-level Amazon, Microsoft or Google executive, their corporate espionage team would have virtually unlimited physical access to Claude inference machines that host copies of the weights

(05:36) With unlimited physical access, a competent corporate espionage team at Amazon, Microsoft or Google could extract weights from an inference machine, without too much difficulty

(06:18) Given all of the above, this means Anthropic is in violation of its most recent RSP

(07:05) Postscript

---

First published:

November 18th, 2025

Source:

https://www.lesswrong.com/posts/zumPKp3zPDGsppFcF/anthropic-is-probably-not-meeting-its-rsp-security

---

Narrated by TYPE III AUDIO.

---

679 Episoden

Alle episoder

×Willkommen auf Player FM!

Player FM scannt gerade das Web nach Podcasts mit hoher Qualität, die du genießen kannst. Es ist die beste Podcast-App und funktioniert auf Android, iPhone und im Web. Melde dich an, um Abos geräteübergreifend zu synchronisieren.